motyl.dev Weekly #4: Week 13 (Mar 23 – Mar 29, 2026)

A curated digest of what the community found worth reading this week.

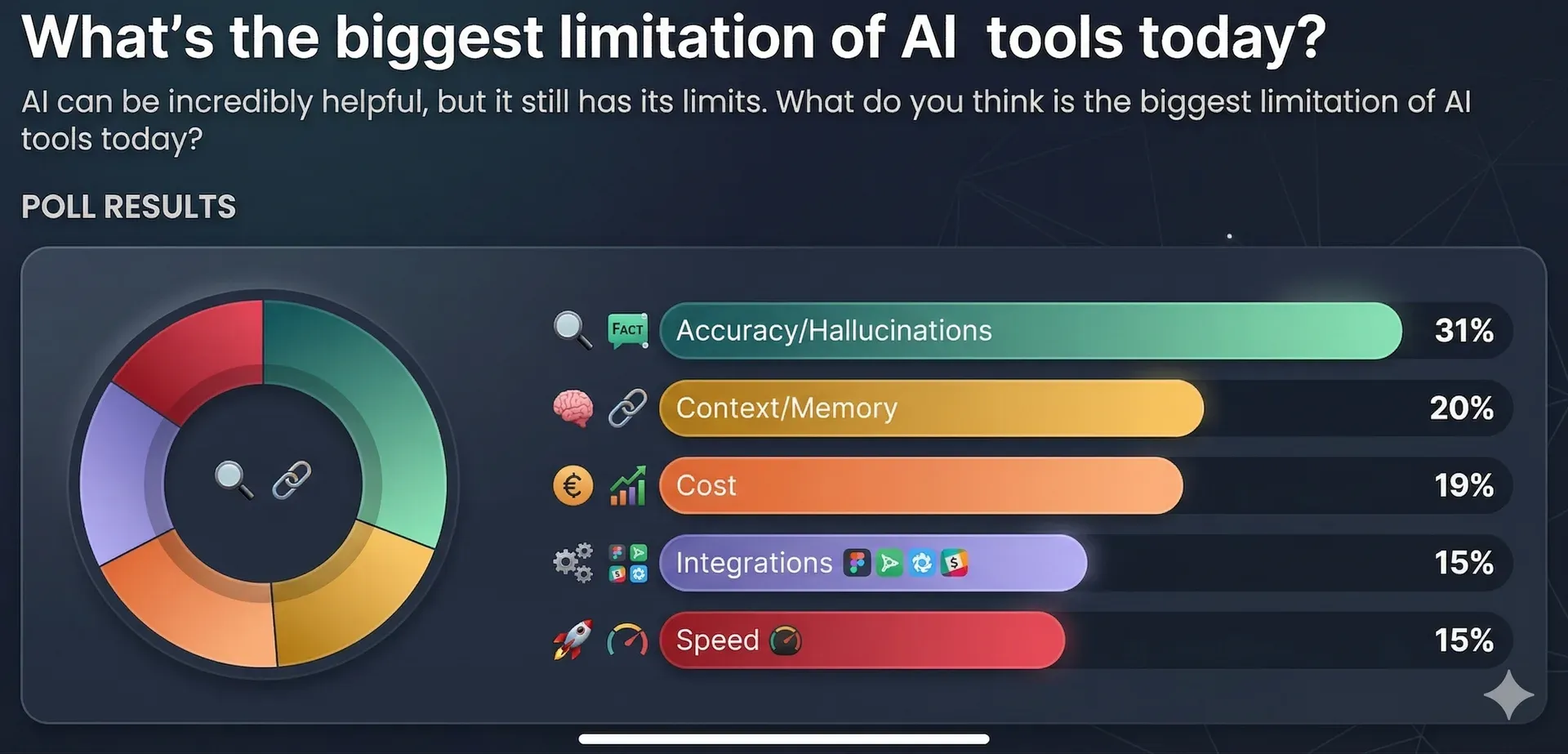

This week, HackerNoon released some interesting survey results. It turns out that the majority of respondents (myself included) believe that hallucinations and accuracy are currently the biggest limitations of AI tools. I have a feeling that this is a constraint of the current technology as a whole, not just specific tools. I’m torn between being worried and quietly relieved, because, well... it’s the reason I still have a job in IT! :)

I know from talking to colleagues that many developers are worried about job stability. Personally, I think the work has actually become more interesting because we can delegate many tedious tasks to agents. Above all, there is still—and hopefully will be for a long time—a need for a "human in the loop" (I actually wrote an article about this a while back).

Also in this newsletter issue:

- A great section on coding

- Exciting updates in Next.js 16.2.

- As always, plenty of content regarding the AI ;)

Enjoy the read!

AI

From 12 Agents to 1: AI Agent Architecture Decision Guide A practical guide to simplifying agent architectures. The temptation to decompose every task into a swarm of specialized agents is real, but this piece argues that most teams are better served by fewer, more capable agents with clear responsibilities. Worth reading before you over-engineer your next agentic workflow.

Grok 4.20: Four AI Agents That Argue Before Answering You xAI takes the opposite approach — four specialized agents (Captain, Researcher, Analyst, Contrarian) debate internally before producing a final answer. The result: a 65% drop in hallucination rate compared to Grok 4.1. The "Contrarian" role, whose entire job is to disagree, is the design choice that makes the whole thing work. MIT research backs the pattern, showing accuracy jumps from 70% to 95% with multi-agent debate.

Google Gemini vs Anthropic Claude vs OpenAI ChatGPT vs xAI Grok: The Ultimate Comparison A comprehensive 2026 LLM comparison that concludes the wars are no longer about raw capability — all four models are competitive at the frontier. The differentiation now is trust, ecosystem, and governance. Claude leads on enterprise trust with an ad-free commitment, Gemini dominates ecosystem integration, ChatGPT retains the broadest user base but shows cracks from ads and Pentagon contracts, and Grok has a real-time data moat but serious governance challenges.

Coding

Next.js 16.2: AI Improvements The companion post to the general 16.2 release focuses on agent-facing features. Dev startup is roughly 87% faster, rendering is 25-60% faster through a React core optimization that eliminates costly C++/JS boundary crossings in V8. Server Functions now log execution in the terminal, and hydration mismatches finally get proper diff indicators.

Error.isError(): A Better Way to Check Error Types in JavaScript

The new Error.isError() static method fixes two long-standing issues with instanceof Error: cross-realm errors from iframes or workers returning false, and fake errors manipulating the prototype chain to pass checks. It uses an internal marker instead of prototype checking. Supported in Chrome, Edge, and Firefox — not yet in Safari.

TermUI: TypeScript terminal UI framework A TypeScript framework for building rich terminal user interfaces. If you've been eyeing Ink or Blessed but wanted something more TypeScript-native, this is worth a look.

Frontend

How we built a Linear coding agent: the hard parts Daily.dev's deep dive into building Huginn, a coding agent that lives inside Linear and automates the full workflow from ticket to PR. The hard parts weren't prompting — they were wrapping Claude Code and Codex as child processes with undocumented streaming formats, and building a three-tier fallback parser for structured LLM output. This is the kind of engineering post that separates demos from production systems.

Start naming your useEffect functions, you will thank me later

A simple but effective practice: give your useEffect callbacks named functions instead of anonymous arrows. It makes stack traces readable, debugging easier, and communicates intent at a glance. Small habit, real payoff.

Tools

Storybook MCP for React A Model Context Protocol server that gives AI coding agents awareness of your component library — stories, props, API docs, usage examples. Instead of generating duplicate components, the agent can reference and reuse what already exists. Also embeds live story previews in chat UIs. If you maintain a design system and use AI-assisted development, this directly addresses the component duplication problem.

Productivity

Blog2Video on GitHub An open-source tool that converts blog posts into video content. Useful for repurposing written content into a format that reaches a different audience.

Other

Poll - What's the biggest limitation of AI tools today? HackerNoon's community poll on current AI tool limitations. Cast your vote and see where the developer community stands on what needs fixing most.

Curated by Grzegorz Motyl. Subscribe for weekly updates.